Blueshift Breaks Memory Wall in Data-Intensive Applications

British startup Blueshift Memory’s RISC-V memory controller core design addresses the von Neumann bottleneck in data-intensive applications like HPC and AI. Blueshift’s alternative to the widely used Harvard architecture, which it calls the Cambridge architecture, can accelerate CPU computation by up to 50× and cut power consumption by 65%, depending on the exact nature of the workload.

“The basic problem is that data is being shuttled backwards and forwards totally unnecessarily because of the random nature of the memory,” Blueshift Memory CEO Helen Duncan told EE Times. “If you structure the data properly, then you know where the data is and you can make sure it’s there when you need it…in the right place at the right time.”

Blueshift CTO and co-founder Peter Marosan was frustrated by the memory wall—sometimes called the von Neumann bottleneck—during his career in HPC.

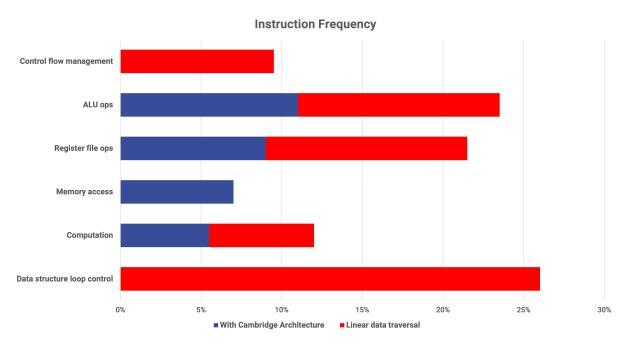

“The CPU is thinking about the data structure, the memory address calculation and its control flow—less than 15% [of instructions] are actually doing the calculation, all the rest is the CPU trying to understand the data structure and giving instructions for the memory to understand, because the memory is dumb,” Marosan told EE Times. “In [Blueshift’s] world, the CPU only does the computation part. Everything else runs in the memory, which restructures itself to store the data [in a specific way].”

Instruction frequency for linear data traversal can be reduced to the blue bars only, using Blueshift’s Cambridge architecture (Source: Blueshift Memory)

Today’s CPUs might spend as much as 60-80% of their time calculating memory addresses, waiting for answers from memory, and running loops that give structure to the data, he said. Addresses are not important; what matters is simply that the right data arrives at the right moment. Storing the data in the right way removes the need for addressing, Marosan said, adding that Blueshift’s architecture hands responsibility for handling data structures and understanding data traversal to the memory.
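To make that division of labour concrete, here is a minimal Python sketch that sums one column of a row-major matrix stored as a flat list. In the conventional version the CPU computes every address (`r * cols + col`) and runs the loop control itself. In the second version a hypothetical `MemoryStream` object, standing in for the smarter memory Marosan describes, is told the traversal pattern (start, stride, count) once and then simply hands over values in order, so the consumer performs only the additions. `MemoryStream` and its parameters are illustrative assumptions, not Blueshift’s interface, and because the "memory" is emulated in software the sketch shows where the work moves, not a speedup.

```python
from typing import Iterator, List


def column_sum_cpu(m: List[float], rows: int, cols: int, col: int) -> float:
    """Conventional model: the CPU computes every address itself."""
    total = 0.0
    for r in range(rows):          # loop control: increment, compare, branch
        idx = r * cols + col       # address calculation
        total += m[idx]            # the only "real" computation
    return total


class MemoryStream:
    """Hypothetical memory-side traversal (illustrative only, not Blueshift's API).

    The traversal pattern is described once; afterwards the consumer just
    receives values in order and never sees an address.
    """

    def __init__(self, storage: List[float], start: int, stride: int, count: int):
        self._storage = storage
        self._start = start
        self._stride = stride
        self._count = count

    def __iter__(self) -> Iterator[float]:
        # In hardware this address generation would live in the memory or
        # its controller; here it is emulated in software.
        for k in range(self._count):
            yield self._storage[self._start + k * self._stride]


def column_sum_streamed(stream: MemoryStream) -> float:
    """CPU side of the hypothetical model: only the additions remain."""
    total = 0.0
    for value in stream:
        total += value
    return total


if __name__ == "__main__":
    rows, cols = 4, 3
    matrix = [float(i) for i in range(rows * cols)]   # row-major 4x3 matrix
    print(column_sum_cpu(matrix, rows, cols, 1))                      # 22.0
    print(column_sum_streamed(MemoryStream(matrix, 1, cols, rows)))   # 22.0
```

Both versions return the same result; the difference is only in which side of the memory bus is responsible for knowing where the next element lives.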

Making memory smarter makes communication between memory and CPU more efficient, and frees up CPU cycles. Savings will apply to all workloads, but the effect will be more pronounced for data-intensive tasks. AI, which is particularly data-intensive, can be sped up by a factor of 5, the company said.
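A back-of-the-envelope, Amdahl-style bound shows how the 60-80% overhead estimate relates to the fivefold AI figure. Assume (our simplification, not the company’s stated method) that a fraction $f$ of run time goes to address calculation, memory waits and loop overhead, that this overhead disappears entirely, and that the remaining compute time is unchanged. The best-case speedup is then:

$$
S_{\max} = \frac{1}{1 - f}, \qquad f = 0.6 \;\Rightarrow\; S_{\max} = 2.5, \qquad f = 0.8 \;\Rightarrow\; S_{\max} = 5
$$

On this idealized reading, the 80% end of Marosan’s estimate lines up with the factor of 5 quoted for AI; the larger speedups quoted elsewhere fall outside this simple model, which ignores any change to the memory traffic itself.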

Of course, the Cambridge architecture could also be used in combination with GPUs or AI accelerators, or anywhere the von Neumann bottleneck is a problem.

“GPUs are using HBM because they have a bottleneck,” Marosan said. “What we can deliver for GPU and AI companies is a much simpler memory structure around them. Maybe they have 10 or 20 memory banks around a calculation—we can deliver only one, with the same speed.”

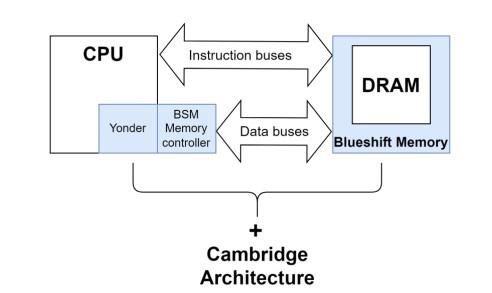

Blueshift’s Cambridge architecture requires Blueshift hardware IP in both the CPU and memory for best results (Yonder is a cache). (Source: Blueshift Memory)

This fundamental architecture change requires Blueshift hardware IP at both the processor end and the memory end of memory buses for best results, though benefits are still seen when it is applied at only one end. The company’s new reference design is a RISC-V memory controller based on an OpenHW core, which sits at the processor end. It works with any processor except CXL-enabled CPUs, and with any type of memory (DDR, HBM, MRAM, etc.). It can also be used where the amounts of data being moved are too big for traditional cache structures.

An FPGA implementation of Blueshift’s memory controller core sped up the STREAM memory benchmarks by 50-300× in different scenarios; the company expects another 4× speedup from a future ASIC implementation. Blueshift has also seen improvements in vision AI and Redis database workloads.
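For readers unfamiliar with STREAM: it times four simple vector kernels (Copy, Scale, Add and Triad) and reports sustainable memory bandwidth, counting the bytes each kernel nominally reads and writes per element. The sketch below reproduces that byte accounting for 8-byte doubles and writes out the Triad kernel, `a[i] = b[i] + q*c[i]`. The function names are our own, and timing pure-Python loops would measure the interpreter rather than the memory system, so this illustrates the metric, not a way to reproduce Blueshift’s results.

```python
from typing import List

# Bytes moved per loop iteration in STREAM's accounting (8-byte doubles):
#   Copy   a[i] = b[i]           -> 1 read + 1 write  = 16 bytes
#   Scale  a[i] = q * b[i]       -> 1 read + 1 write  = 16 bytes
#   Add    a[i] = b[i] + c[i]    -> 2 reads + 1 write = 24 bytes
#   Triad  a[i] = b[i] + q*c[i]  -> 2 reads + 1 write = 24 bytes
BYTES_PER_ITER = {"copy": 16, "scale": 16, "add": 24, "triad": 24}


def triad(a: List[float], b: List[float], c: List[float], q: float) -> None:
    """STREAM Triad kernel, written out for clarity (not for speed)."""
    for i in range(len(a)):
        a[i] = b[i] + q * c[i]


def bandwidth_gbs(kernel: str, n: int, seconds: float) -> float:
    """Bandwidth in GB/s (10**9 bytes/s) for one pass of `kernel` over n elements."""
    return BYTES_PER_ITER[kernel] * n / seconds / 1e9


def speedup(baseline_seconds: float, new_seconds: float) -> float:
    """Speedup of a new run over a baseline run of the same kernel and size."""
    return baseline_seconds / new_seconds


if __name__ == "__main__":
    a, b, c = [0.0] * 4, [1.0] * 4, [2.0] * 4
    triad(a, b, c, 3.0)
    print(a)                                           # [7.0, 7.0, 7.0, 7.0]
    print(bandwidth_gbs("triad", 50_000_000, 0.5))     # 2.4
```

Because bandwidth is bytes divided by time, a speedup on a fixed-size STREAM run translates directly into a proportionally higher reported bandwidth.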

New software libraries will be required. Blueshift is working with an HPC compiler company to create C/C++/FORTRAN/Python/R/JavaScript libraries for developers.

Target customers for Blueshift are CPU vendors, AI chip companies and memory manufacturers, Duncan said. One of Blueshift’s benefits is that it can boost performance for older generations of memory, saving money and giving a competitive advantage to Tier-2 memory makers. The company is currently collaborating with an Asia-based HBM manufacturer on Blueshift-enabled HBM, and with a RISC-V IP provider to integrate its memory controller with that provider’s AI inference accelerator core.

The company is also working on quantum-proof encryption with Crypta Labs.