Marvell Meets AI Data Center Needs With 3 nm PAM4 DSP

This week, Marvell Semiconductor announced what it calls the industry’s first 3-nm, 1.6-Tbps PAM4 DSP.

Marvell Ara PAM4 DSP. Image (modified) used courtesy of Marvell Semiconductor

The Marvell Ara platform comes at a time when data centers are grappling with the growing data transfer requirements of AI and cloud computing. Generative AI models and machine learning workflows are placing enormous strain on existing infrastructure, and innovations in optical and electrical interconnect technologies have become necessary.

Marvell Introduces Ara DSP Platform

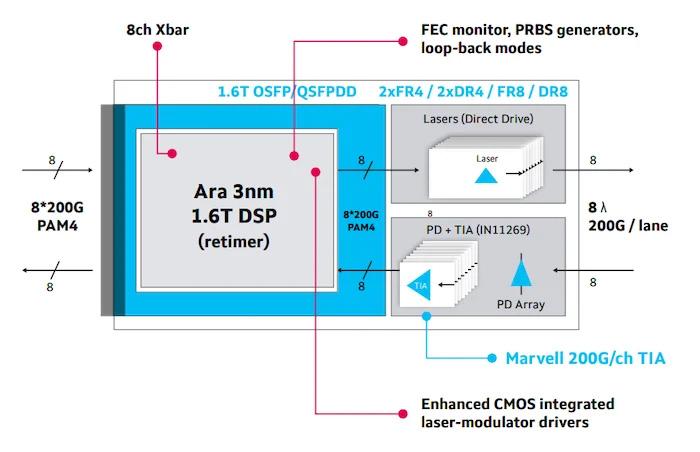

The Marvell Ara PAM4 DSP platform (product brief linked) achieves a total bandwidth of 1.6 Tbps by integrating eight 200-Gbps PAM4 electrical lanes and eight 200-Gbps optical interfaces. It is built on a 3-nm process, which Marvell says cuts power consumption by 20% compared to its predecessor. Marvell claims this efficiency helps support energy-intensive AI workloads within the power constraints of hyperscale data centers. The DSP also integrates high-swing laser modulator drivers, eliminating the need for external drivers, which simplifies module design and reduces manufacturing costs.

For interoperability in multi-vendor environments, the platform adheres to the IEEE 802.3dj and InfiniBand XDR standards. Its concatenated forward error correction (FEC) scheme runs at a line rate of 226.875 Gbps per lane, providing pre-FEC bit error rate (BER) margin intended to support high-volume deployments. The DSP also provides real-time diagnostics, including signal-to-noise ratio (SNR) monitoring, eye diagram analysis, and loopback on both the host-side and line-side interfaces.
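The per-lane figures can be cross-checked with simple arithmetic. The sketch below assumes 2 bits per PAM4 symbol and treats the 226.875-Gbps figure as the per-lane line rate including all encoding and FEC overhead, measured against the nominal 200-Gbps lane rate; the variable and function names are illustrative, not from Marvell.

```python
# Lane-rate arithmetic for an 8-lane, 200G-per-lane PAM4 link.
# Assumption: 226.875 Gb/s is the per-lane rate after all encoding/FEC
# overhead, and each PAM4 symbol carries 2 bits.

LANES = 8
NOMINAL_GBPS = 200.0        # nominal data rate per lane
LINE_RATE_GBPS = 226.875    # per-lane rate including encoding and FEC overhead
BITS_PER_PAM4_SYMBOL = 2    # log2(4 amplitude levels)

def symbol_rate_gbaud(line_rate_gbps: float, bits_per_symbol: int) -> float:
    """Symbol (baud) rate needed to carry line_rate_gbps."""
    return line_rate_gbps / bits_per_symbol

def overhead_fraction(line_rate_gbps: float, nominal_gbps: float) -> float:
    """Extra line rate spent on encoding/FEC, relative to the nominal rate."""
    return line_rate_gbps / nominal_gbps - 1.0

aggregate_tbps = LANES * NOMINAL_GBPS / 1000          # eight 200G lanes
baud = symbol_rate_gbaud(LINE_RATE_GBPS, BITS_PER_PAM4_SYMBOL)  # 113.4375 GBd
overhead = overhead_fraction(LINE_RATE_GBPS, NOMINAL_GBPS)      # about 13.4%

print(f"aggregate: {aggregate_tbps} Tb/s")
print(f"symbol rate per lane: {baud} GBd")
print(f"overhead vs. nominal: {overhead:.2%}")
```

In other words, each lane signals at roughly 113 GBd to deliver 200 Gbps of data once the FEC overhead is accounted for.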

Marvell Ara block diagram. Image used courtesy of Marvell Semiconductor

Ara supports 1.6T OSFP and QSFP-DD module form factors and optical interconnect configurations such as DR8, FR4, and LR8. An 8 × 8 any-to-any crossbar adds flexibility in routing traffic among the high-speed I/Os. The platform also supports multiple gearbox modes, including half-rate and quarter-rate configurations, for backward compatibility with legacy systems.
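An any-to-any crossbar can be modeled, in simplified form, as a one-to-one map from input lanes to output lanes. The sketch below assumes that model (a permutation of eight lanes); the function names and the lane-swap example are hypothetical and do not reflect Marvell's actual configuration interface.

```python
from typing import Dict, List

NUM_LANES = 8  # 8 x 8 crossbar, per the article

def validate_crossbar_map(mapping: Dict[int, int], n: int = NUM_LANES) -> None:
    """Check that a host->line lane map is a valid one-to-one crossbar setting.

    Hypothetical model: each host lane drives exactly one line lane and no
    line lane is driven twice (i.e., the map is a permutation of 0..n-1).
    """
    if sorted(mapping.keys()) != list(range(n)):
        raise ValueError("every host lane 0..n-1 must be mapped exactly once")
    targets = list(mapping.values())
    if any(t < 0 or t >= n for t in targets):
        raise ValueError("line lane index out of range")
    if len(set(targets)) != n:
        raise ValueError("two host lanes cannot drive the same line lane")

def route(host_data: List[str], mapping: Dict[int, int]) -> List[str]:
    """Place each host lane's payload on its assigned line lane."""
    validate_crossbar_map(mapping, len(host_data))
    line_data = [""] * len(host_data)
    for host_lane, line_lane in mapping.items():
        line_data[line_lane] = host_data[host_lane]
    return line_data

# Example: swap adjacent lane pairs, e.g. to undo a hypothetical board-level
# lane swap without rerouting PCB traces.
swap_pairs = {0: 1, 1: 0, 2: 3, 3: 2, 4: 5, 5: 4, 6: 7, 7: 6}
print(route([f"h{i}" for i in range(NUM_LANES)], swap_pairs))
```

Validating the map up front mirrors the constraint that, in this one-to-one model, no output lane can be driven by two inputs at once.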

The Ara DSP’s integrated laser drivers and adherence to industry standards position it for the high-density, 200-Gbps-per-lane I/O interfaces used in next-generation switches, NICs, and XPUs.

PAM4 in AI-Driven Data Centers

AI-driven data centers face a mounting challenge: scaling bandwidth to meet unprecedented data transfer demands while maintaining energy efficiency. With the proliferation of generative AI models and machine learning, the volume of data processed has grown exponentially. Training these models requires high-speed, low-latency interconnects that can transfer terabits of data per second across switches, NICs, and XPUs. Traditional binary modulation techniques have become insufficient to meet these bandwidth demands, prompting a shift toward more advanced solutions like Pulse Amplitude Modulation 4-level (PAM4).

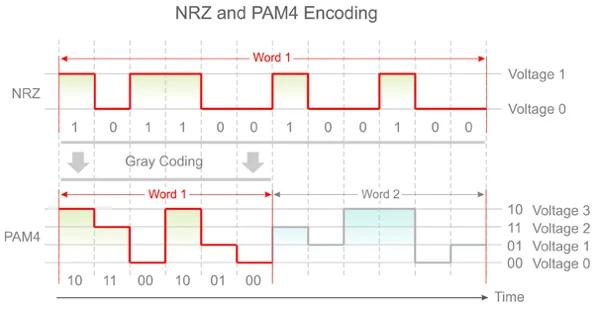

PAM4 modulation carries two bits in each symbol, twice the one bit per symbol of conventional non-return-to-zero (NRZ) modulation. By encoding data into four distinct amplitude levels, PAM4 doubles the data rate at the same symbol rate, so a link does not need twice the signaling frequency to carry twice the data. However, PAM4 is more susceptible to noise and distortion: at the same peak-to-peak swing, each of its three eyes is one-third the height of an NRZ eye, a penalty of roughly 9.5 dB, which must be recovered through advanced error correction and signal processing.
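The difference between the two schemes can be sketched in a few lines of Python. This is a generic illustration, not Ara's implementation: it assumes ideal, equally spaced levels normalized to -3, -1, +1, +3, the Gray-coded bit mapping commonly used for PAM4 (including in IEEE 802.3), and equal peak-to-peak swing for NRZ and PAM4.

```python
import math

# Gray-coded PAM4: each 2-bit pair maps to one of four amplitude levels,
# ordered so that neighboring levels differ in exactly one bit.
PAM4_GRAY = {(0, 0): -3, (0, 1): -1, (1, 1): 1, (1, 0): 3}
NRZ_LEVELS = {0: -1, 1: 1}

def nrz_encode(bits):
    """One bit per symbol: two amplitude levels."""
    return [NRZ_LEVELS[b] for b in bits]

def pam4_encode(bits):
    """Two bits per symbol: four amplitude levels."""
    if len(bits) % 2:
        raise ValueError("PAM4 needs an even number of bits")
    return [PAM4_GRAY[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]

bits = [1, 0, 0, 1, 1, 1, 0, 0]
print(nrz_encode(bits))   # 8 symbols for 8 bits
print(pam4_encode(bits))  # 4 symbols for the same 8 bits

# Eye height as a fraction of the total swing (same peak-to-peak for both).
levels = sorted(PAM4_GRAY.values())
swing = levels[-1] - levels[0]
pam4_eye_fraction = (levels[1] - levels[0]) / swing  # one of three stacked eyes
nrz_eye_fraction = 1.0                                # single eye spans the full swing
snr_penalty_db = 20 * math.log10(nrz_eye_fraction / pam4_eye_fraction)
print(f"PAM4 eye-height penalty vs. NRZ: {snr_penalty_db:.2f} dB")
```

The roughly 9.5-dB figure (20·log10 3) ignores other impairments such as level nonlinearity, but it shows why PAM4 links lean so heavily on FEC and DSP equalization. Gray coding helps here too: the most likely error, a slip to an adjacent level, corrupts only one of the two bits.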

NRZ and PAM4 encoding. Image used courtesy of Samtec

As Ara illustrates, finer process nodes have made it practical to integrate high-swing laser modulator drivers and advanced error correction directly into the DSP. These features address PAM4's inherent limitations while sustaining data transmission at 200 Gbps per lane. Reducing power consumption in optical modules has become a priority, and moving to a smaller process node helps make that possible.

Supporting Future Data Center Connectivity

As generative AI and machine learning continue to grow, solutions that meet bandwidth requirements while optimizing power efficiency will define the competitiveness of hyperscale infrastructure. Developments like Marvell Ara could be instrumental in ensuring that networks can handle AI data flows without compromising operational costs or sustainability goals. Marvell says Ara will begin sampling to select customers starting in 2025.